This past weekend I was lucky enough to return to News Foo 2012. Elise, Derek*, Molly, Adriano, and Greg have all given excellent recaps, so I won’t try to duplicate those. Instead, I’ll talk about how I changed after attending News Foo 2011 and what I’ve learned since then.

First, News Foo is an event unlike any other I’ve ever attended. There have been a few experiences in my life that stand out for altering my outlook on what is achievable or for teaching me courage or grace. Bike racing, parenting, and marriage have each yielded a few of these and triggered irreversible personal growth. News Foo is the only thing remotely related to my media career that’s given me that kind of perspective.

News Foo is a sort of intellectual Burning Man for those who are passionate about news and technology. It’s an intense experience lived over a very short period of time, where you will be exposed to more ideas and more incredible people than you can possibly absorb in a few years, let alone two days. It’s a hand-picked, curated crowd chosen to send each other sky-high in the hopes that we’ll return home to tackle big problems with renewed vigor, new perspective, and each other’s help.

While I typically attend public unconferences, the private invite-only setting and FrieNDA ethic of News Foo offer a level of intimacy and openness I’ve never seen anywhere else. Campers range from up-and-comers to outright luminaries in fields that include media, technology, science, art, business, and academia. They’re also precisely the kind of individuals who want to approach old intractable problems with new optimism and creativity. John Bracken only half-jokingly quipped: “If this room exploded, the Internet would be set back 10 years.” For most attendees, it is impossible to leave Phoenix uninspired. **

My first News Foo was an amazing blur. I did not heed the organizers’ advice and arrived at News Foo 2011 poorly rested. I was both overwhelmed and star-struck to be among so many people I’d admired from afar for so long. I gave an Ignite talk on reinventing audio. I participated in sessions ranging from remaking newsrooms to “Let’s invent the worst startup imaginable.” (One of the finalists was “Chew’d”, a food-truck franchise that serves — wait for it — pre-chewed food.) We got off campus for a hike and a trip to the botanical garden. I played an obscene amount of Werewolf that culminated in a total mind-screw.

I came to News Foo 2012 much more relaxed. I actually slept the week prior and did not commit to an Ignite this year (i.e., I was able to drink beers and relax). Sean Bonner, Nadav Aharony, John Keefe, Alex Howard, and I led a Saturday morning panel on sensors. A Saturday session on “Engineering Serendipity” got meta when session leader Ethan Zuckerman remarked that the News Foo attendee list was itself curated in favor of serendipity. Brian Fitzpatrick added that some individuals would never choose to attend such an event, further curating the experience. I took Sara’s advice and went to more panels that sounded offbeat and interesting. I commiserated with fellow type-A’s trying to unplug and take a vacation and also shared “FAIL” stories with some incredible folks. We took a walk around Phoenix. A brief conversation with Jenny Lee and David Cohn turned into my personal self-help session. Even at News Foo, the best conversations often happen outside of sessions, and yes – at Werewolf. So of course, I played an obscene amount of Werewolf that culminated in a total mind-screw.

And what about the time between my first and second News Foos? One of my takeaways from News Foo 2011 was that I needed to share more. I did that this year both person-to-person and at events. While I survived my first Ignite talk, I found it to be such a valuable exercise that I decided to do more. I gave a talk on sensors for news at the TechRaking conference at Google and another humorous one on fashion, news and cognitive bias. It takes me upwards of 30 hours to prepare a five-minute Ignite-style talk (generically a “lightning talk”), but the time and format constraints force you to be concise and clear while delivering a strong narrative arc. It’s something that’s greatly helped me communicate complicated ideas to diverse audiences. Those are skills I need to perfect to create the kind of impact I expect.

The inspiration is certainly powerful, but the most enduring gift of News Foo is the network. I’m not exactly a shrinking violet, and I have no shortage of people in my personal network. But this year, I chose to make Foo friends my primary network, and that has had a terrific impact on every action I’ve taken and every decision I’ve made since.

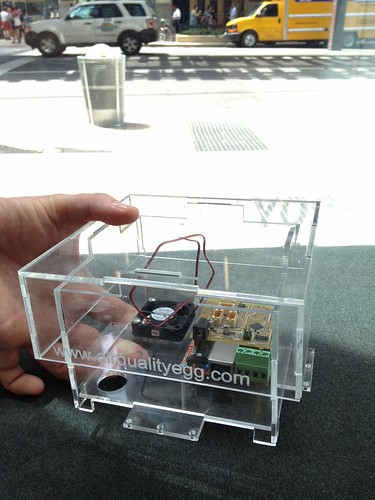

This year I’ve introduced campers to friends and professional acquaintances, helped them seal a few business deals, and called on them for advice. If you were in town for a few hours, I figured out how to get away from the office and grab coffee. Just yesterday I had lunch with Dan Oshinsky before he moves to NYC to join BuzzFeed. The Chicago Air Quality Egg Hackshop wouldn’t have happened last summer if I hadn’t met Fitz at News Foo, become friends, attended his ORDcamp, and then introduced his incredible Chicago community to Joe and Ed, the NYC-based leaders of the Egg project. Networks matter. If I met you at a camp, I immediately advanced you to “old friend” status. And why wouldn’t I, since we just endured a marathon of bliss together and we’re all so passionate about solving the same difficult problems? Whatever I put into this community, I get so much more in return.

News media is in the business of solving big societal information problems. We’re strapped in every way imaginable, and to be able to tap the opinions of exceptional friends has changed the way I plan long-term and the way I work day-to-day.

Was it hyperbole last year when I called News Foo “life-changing”? No, I think I just explained how I have indeed changed and why I’m better for it. There are so many wonderful people I’ve shared and interacted with this year, but I want to specifically thank Sara Winge, Richard Gingras, John Bracken, Tim O’Reilly, and Jenny 8 Lee for organizing the event.

It’s been a few days since I returned from News Foo 2012. I’m recovering and still supercharged, though I realize that the inevitable post-Foo withdrawal will soon arrive. I’ve been fortunate enough to attend two years in a row, which likely means it won’t be my turn again for some time. I’ll still be chasing crazy ideas and still be on the network ready to motivate and assist. Look for me, and don’t be shy about reaching out.

* Derek’s piece was excellent, and my only potential point of disagreement is that this perceived lack of ideological diversity has in its roots some very partisan stances that have no place at any open event that looks to promote understanding and tolerance. News Foo is not an inherently partisan event, and the majority of journalist attendees exemplify the non-partisan stances of their profession. The majority of non-journalism attendees show the same disdain for politics that most of the country does. That said, News Foo and its attendees embrace ethnic diversity, advancement of science, gender equality, and sexual orientation equality. These are non-partisan values that unfortunately have been politicized and rejected by one of the major political parties. The only esteemed News Foo value where both parties share an equally abysmal record is the Open Internet. I look forward to a time when the agreed-upon starting point is that diversity, science, and equality are good things, and then we disagree on how to get there most quickly.

** In both 2011 and 2012 I met a few campers who don’t see a path forward and were probably invited because they are in a position of responsibility and everyone hopes their internal switches will flip. It’s the job of everyone else to inspire them and convince them to shake things up. Unfortunately, it doesn’t always work.